Helen Fan is one of those people you follow on LinkedIn not because of their job title, but because of what they're actually doing. When we spoke, she was 55 days into a 100-day experiment building an AI-native law firm from scratch: two AI agents, live multi-agent communication, and a framework she created called the Legal AI Value Stack. She has the scars to show for it.

This conversation goes deep into what's actually hard about agents, why Big Law has a structural problem that enthusiasm alone can't fix, and where to start if you've done nothing yet.

In this episode, we discuss:

- Why Helen programs her AI agents to argue with each other — and what the "argument report" reveals about where AI actually breaks down

- The five-level Legal AI Value Stack, and why most legal AI companies are stuck at level one even when they think they're not

- Why Big Law's billable hour model creates a structural barrier that no adoption strategy can fully get around

- The security guardrail problem in multi-agent systems — why closing one loophole just opens another

- Why avoiding AI for compliance reasons is not a safety strategy — and what the orchestration layer actually looks like in practice

My biggest takeaways

Make your agents argue to identify novel insights

Helen built her law firm with two agents. Morgan is the senior associate who handles strategy, client management, email screening, and breaks complex problems into sub-tasks for Cleo. Cleo is the junior associate who works on legal research, case law, and first drafts. What makes the setup unusual is that Helen deliberately designed them to disagree.

When Cleo challenges Morgan's reasoning, the system generates what Helen calls an argument report. It is a structured summary of exactly where the two agents differ in their views of the problem. Helen uses it to direct her own review and determine which points actually need a human in the loop.
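To make the idea concrete, here is a minimal sketch of what an argument report could look like as data. It is not Helen's implementation: the `Position` structure, the `build_argument_report` function, and the sample issues are all assumptions made up for illustration. The sketch simply keeps the issues on which the two agents reached different conclusions.

```python
from dataclasses import dataclass


@dataclass
class Position:
    """One agent's conclusion on one issue (hypothetical structure)."""
    agent: str
    issue: str
    conclusion: str


def build_argument_report(first, second):
    """Return the issues on which the two agents reached different conclusions."""
    second_by_issue = {p.issue: p for p in second}
    report = []
    for a in first:
        b = second_by_issue.get(a.issue)
        # Only issues both agents addressed, with differing conclusions, are reported.
        if b is not None and b.conclusion != a.conclusion:
            report.append({"issue": a.issue, a.agent: a.conclusion, b.agent: b.conclusion})
    return report


# Made-up positions, for illustration only.
morgan = [
    Position("Morgan", "governing law", "Delaware"),
    Position("Morgan", "liability cap", "enforceable"),
]
cleo = [
    Position("Cleo", "governing law", "Delaware"),
    Position("Cleo", "liability cap", "likely unenforceable"),
]

report = build_argument_report(morgan, cleo)
for entry in report:
    print(entry)
```

In this toy version the agents agree on governing law, so only the liability cap appears in the report. That single entry is the point a human reviewer would look at first.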

Most people treat AI disagreement as a failure state. Helen made it the product.

"I deliberately make them argue. Because only in this way can you see the value of the multi-agent platforms. You can give them different roles — for example, a business advisor and a legal advisor — and see the common ground between the legal and business perspective."

The argument report surfaces something a single agent would never show you: the blind spots.

When two agents consistently disagree on the same point, it tells you something about the limits of the model, not just the task. That is information you cannot get any other way.

Most legal AI companies are at level one and don't know it

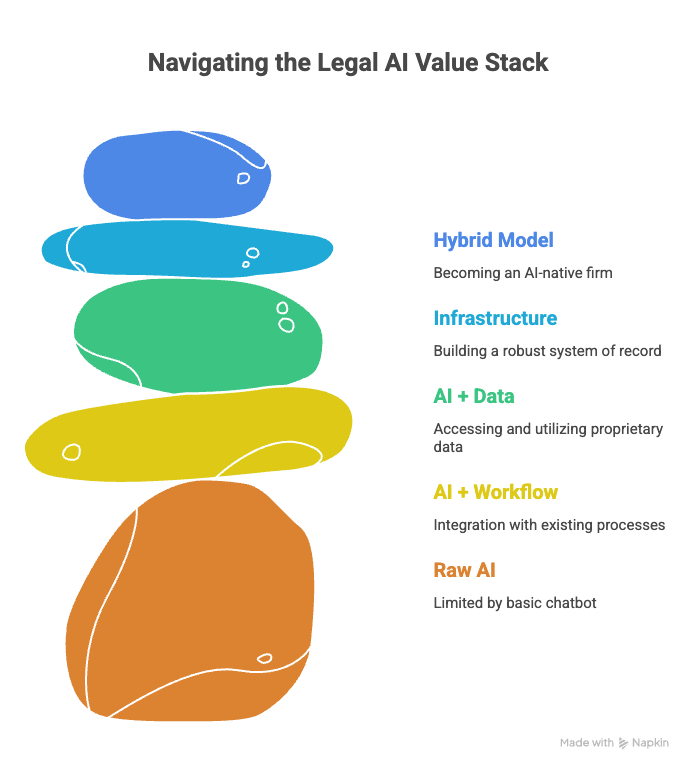

Helen's Legal AI Value Stack has five levels. Level one is raw AI capability — using AI as a chatbot, even with a RAG layer on top. If you are still in a chat window asking questions and getting answers, you are at level one.

Level two is AI plus workflow, the point where AI connects to how lawyers actually work, rather than just responding to prompts. Level three is proprietary data. Level four is infrastructure. Level five is the hybrid model: the AI-native firm.

Helen's read is that most legal AI companies sit at level one or level two right now. Word plugin features and standard contract review tools are typical level two products. The problem is that as frontier labs like Anthropic release their own legal plugins and workflow features, the distance between level two and level one starts to compress. What looked like a moat becomes a commodity.

"Everyone wants to be at level three or level four. But level three requires scale. That intelligence only emerges across hundreds or thousands of clients. No single client's usage can produce it."

The companies that can hold level three are those that have earned enough trust to sit atop their clients' core data: contracts, negotiation patterns, and risk preferences accumulated over years. That trust cannot be bought with a funding round. It cannot be shipped as a feature.

Big Law has a structural problem, not just an adoption problem

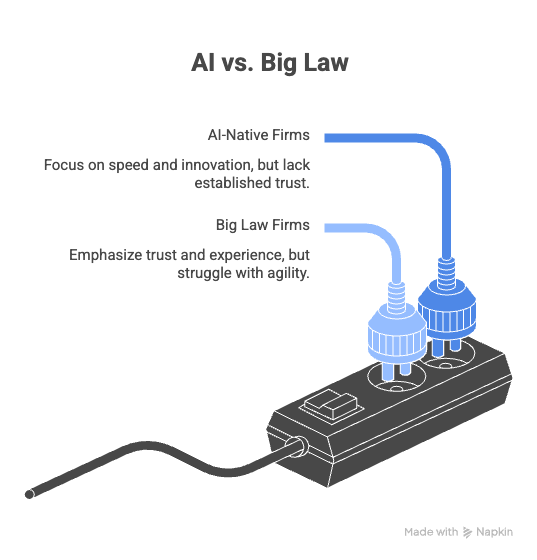

At Stanford Future Law Week, something became clear to Helen: Big Law and AI-native firms are not competing with each other. They are each racing to fix their own weakness.

AI-native firms have speed: they build, deploy, and iterate fast. But they lack deep legal expertise, and clients do not yet trust them.

Big Law has trust, talent pipelines, and long-standing client relationships. But it also has something else.

"Their billable hour model works against efficiency. If AI makes you faster, you bill fewer hours. That's a structural problem."

Beyond the billing model, there is the approval cycle. A committee convenes to evaluate a tool. They deliberate. They finally approve it. By the time the approval lands, the model version they evaluated has already been superseded twice.

AI changes faster than internal governance can track, and no efficiency gain inside Big Law can guarantee relevance when the approval cadence itself is the bottleneck.

Agents are fun and deeply unsafe at the same time

Helen was candid about her earliest realization: "AI agents are fun, deeply unsafe at the same time." She started the experiment assuming that if she gave agents a clear instruction, they would follow it. They don't. In a multi-agent setup, the instability compounds.

The platform has its own limitations. The models have their own failure modes. The chat software (Discord, Telegram, etc.) was never designed for this use case. Three sources of breakage are running simultaneously, with no clean way to isolate which one is causing the problem.

"You close one loophole and another one just opens. And those problems come from three directions at once — the platform, the models, and the chat software."

Her advice for anyone who wants to try agents: be patient, build your guardrails before you need them, and do not leave them running overnight. Prompt injection risk is real, and unchecked agent loops can burn through tokens without producing anything useful.
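One simple guardrail of this kind is a hard cap on turns and tokens, so a stuck loop stops itself. The sketch below is an assumption about how that might look, not a description of Helen's setup: `Budget`, `run_with_guardrails`, and the `step` callable (which stands in for one agent turn and returns the tokens used and whether the task is done) are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    """Hard limits for one agent run (hypothetical structure)."""
    max_turns: int
    max_tokens: int


def run_with_guardrails(step, budget):
    """Call step() until it reports done or a limit is hit.

    step is a hypothetical callable standing in for one agent turn;
    it returns a (tokens_used, done) tuple.
    """
    turns = 0
    tokens = 0
    while turns < budget.max_turns:
        used, done = step()
        turns += 1
        tokens += used
        if done:
            return "done", turns, tokens
        if tokens >= budget.max_tokens:
            return "token_budget_exceeded", turns, tokens
    return "turn_limit_reached", turns, tokens


def stuck_agent():
    """Simulates an agent loop that burns 400 tokens per turn and never finishes."""
    return 400, False


result = run_with_guardrails(stuck_agent, Budget(max_turns=10, max_tokens=1000))
print(result)
```

With these made-up numbers, the stuck agent is halted on its third turn, once cumulative usage passes the 1,000-token budget, rather than running all night. A cap like this limits cost; it does nothing about prompt injection, which needs separate controls.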

The orchestration layer is where transformation actually begins

Helen's advice for people who have done nothing with AI starts with a mindset shift before it touches tools. Most people who avoid AI cite compliance concerns — privacy, data security, and client confidentiality. Helen points out that both Anthropic and OpenAI publish opt-out provisions in their privacy terms. The compliance picture is more nuanced than a blanket refusal.

"Avoiding AI entirely is not a safety strategy. It's just falling behind."

Once that hurdle is cleared, Helen's starting point is to build an orchestration layer. In a law firm, that means an agent that handles client intake — gathering context, filtering cases, surfacing what deserves a human. In a legal department, it means connecting the business side to legal through agents that can handle low-risk requests directly and escalate higher-risk ones to the right person.
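The routing idea for a legal department can be sketched in a few lines. Everything here is an illustrative assumption rather than anything Helen described in detail: the `Request` structure, the `route` function, and the risk labels are hypothetical, and how a request gets its risk label is deliberately left out of scope.

```python
from dataclasses import dataclass


@dataclass
class Request:
    """An incoming request from the business side (hypothetical structure)."""
    summary: str
    risk: str  # assumed to be assigned upstream, e.g. "low" or "high"


def route(request):
    """Send low-risk requests to an agent and everything else to a human."""
    if request.risk == "low":
        return "agent"
    # Anything not explicitly low-risk, including unknown labels, is escalated.
    return "human"


# Made-up requests, for illustration only.
requests = [
    Request("Where is the standard NDA template?", "low"),
    Request("Counterparty is threatening litigation", "high"),
    Request("Unlabelled request", "unknown"),
]

for r in requests:
    print(r.summary, "->", route(r))
```

The design choice worth noting is the default: a request that has not been positively classified as low risk goes to a person, so classification gaps fail toward human review.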

The orchestration layer is where data begins to accumulate. Over time, that data becomes the infrastructure for level three and beyond. What sounds like a starting point is actually the foundation for everything that follows in the value stack.

The reason so many teams buy AI tools and see no transformation is simpler than it sounds: they bolt new tools onto old processes and expect different results. Helen calls it step-one thinking dressed up as step two.

"The biggest mistake is buying tools without redesigning your workflows. Sometimes people just bolt AI onto the old process and wonder why it doesn't transform anything. That's step one thinking, while we need step two thinking."

You redesign the workflow first. Then you automate it. That sequence is not a detail. It is the whole point.

Pair this conversation with the analysis of why Carta entered the law firm game by reading The Law Firm Inside the Platform.

Until next time.
